At what point does your brain perceive sounds as music?

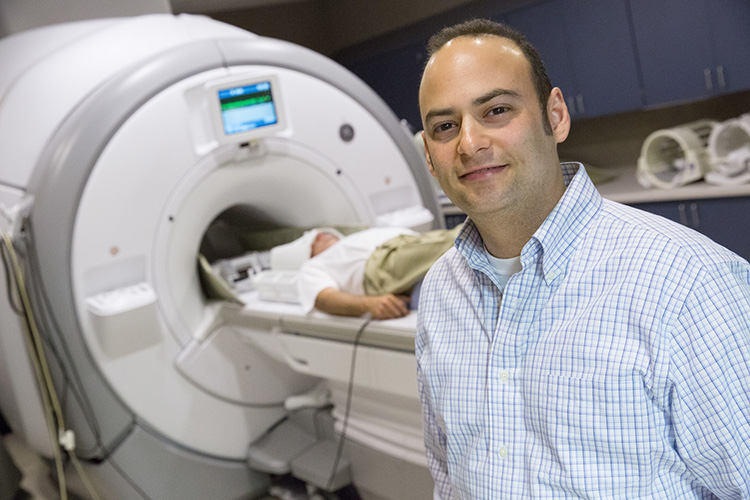

In an effort to find out what draws our attention to certain sounds, including music, Adam Greenberg, an assistant professor of psychology at UWM, is using a type of brain imaging called functional magnetic resonance imaging, or fMRI, which shows researchers which parts of the brain are active during a task. Backed by a UWM Research Growth Initiative grant, he is teasing apart how the brain recognizes music and how we respond to it.

How did you get started in studying music and the brain?

I’m primarily interested in how the brain processes objects and filters out objects when necessary. Objects can be visual, but also auditory. So the sound of my voice, we would call that an auditory “object” because it’s a potential focus of attention, as opposed to the sound of traffic noise outside.

The famous example of this is the “cocktail party effect,” where you need to filter out all of the background noise so that you can focus on conversing with the person who’s next to you. However, if someone on the other side of the room says your name, all of a sudden it diverts your attention.

If we observe that music has qualities that are different from just any random sound, then how do you go about finding out what those qualities are?

Music is difficult to describe because the whole is greater than the sum of its parts. The question that we set out to answer is, “If we make changes to very low-level auditory properties – the parts – will that change someone’s perception of music – the whole?”

When you say low-level auditory changes, what are you talking about?

The features of the sounds themselves – not the melodies. For this study, we explicitly stayed away from using pieces of known music because we didn’t want that to influence how subjects perceived the sounds. Instead, we randomly generated melodies using simple sequences of 10 pure tones as stimuli. To my knowledge, no one has done a study like this before.

Then we manipulated three qualities of the stimuli: One was the amplitude, or the loudness, of the tones in this sequence. Another was how sharp the notes were. When you hear a note, it can be an abrupt “ta,” or it can fade in, more like a “waaaa.” And the third thing we manipulated was timbre, which is the difference in how the same note sounds when it’s made by a flute as opposed to a piano, for example.
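For readers who want to hear what such stimuli might sound like, the description above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the lab's actual procedure: the pitch set, sample rate, tone duration, and the names `make_tone`, `random_melody`, and `render` are all hypothetical, and timbre is approximated here by adding overtones to a pure tone.

```python
import math
import random

SAMPLE_RATE = 16000  # samples per second (assumed, not from the study)

# Hypothetical pitch set (C major scale, in Hz); the study's pitches are not stated.
PITCHES = (261.63, 293.66, 329.63, 349.23, 392.00, 440.00, 493.88, 523.25)


def make_tone(freq_hz, duration_s=0.25, gain=1.0, attack_s=0.0, harmonics=(1.0,)):
    """Synthesize one tone as a list of float samples.

    gain      -- scales amplitude (loudness)
    attack_s  -- length of a linear fade-in; 0 gives an abrupt onset
    harmonics -- weights of the fundamental and its overtones (a crude timbre)
    """
    n = int(SAMPLE_RATE * duration_s)
    attack_n = int(SAMPLE_RATE * attack_s)
    total = sum(harmonics)
    samples = []
    for i in range(n):
        t = i / SAMPLE_RATE
        # Weighted sum of sine waves at integer multiples of the fundamental.
        value = sum(
            w * math.sin(2 * math.pi * freq_hz * (k + 1) * t)
            for k, w in enumerate(harmonics)
        ) / total
        # Linear ramp from 0 to 1 over the attack period.
        envelope = min(1.0, i / attack_n) if attack_n > 0 else 1.0
        samples.append(gain * envelope * value)
    return samples


def random_melody(rng, n_tones=10):
    """Pick a random sequence of pitches, one per tone."""
    return [rng.choice(PITCHES) for _ in range(n_tones)]


def render(melody, **tone_kwargs):
    """Concatenate the tones of a melody into one sample list."""
    out = []
    for freq in melody:
        out.extend(make_tone(freq, **tone_kwargs))
    return out


rng = random.Random(0)
melody = random_melody(rng)

base = render(melody)                                # unmodified pure tones
louder = render(melody, gain=2.0)                    # amplitude manipulation
soft = render(melody, attack_s=0.1)                  # gradual "waaaa" onset
bright = render(melody, harmonics=(1.0, 0.5, 0.25))  # timbre manipulation
```

Each variant keeps the same ten-note melody and changes only one property, which mirrors the idea of comparing a modified stimulus against its baseline.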

Tell us about the experiment.

We presented these stimuli to a group of subjects, and we asked them to rate how musical each one was on a scale of one to five. The first thing we noticed was that, across subjects, there was a surprising amount of agreement about which stimuli were considered musical and which were not. So right away, we knew we were on to something.

Now you have a group of melodies and where they fit in on a scale of musical to nonmusical. Then what?

Then we brought in a new set of subjects and asked them to rate both the original base melodies and the modified melodies, from most musical to least.

We found that our manipulations changed the way that the subjects rated these melodies. When the loudness of the stimuli was increased, participants rated them as more musical than their baseline counterparts. The other two manipulations – where we changed the notes’ sharpness and the timbre – made those melodies sound less musical to our subjects.

So what does all this mean?

I think it means that to make judgments about what is musical requires two networks in the brain – those involved in processing music and those involved in processing low-level features of sound.

Here’s where the brain imaging comes in. We’ve shown that the two networks do partially overlap in perceiving music. That’s important because it was never thought that the two networks influenced one another directly.

And, what’s more, we also are finding that some of those brain regions that process the basic properties of sound are shared with regions that are involved in processing low-level properties of visual information. So basic “object” perception in your brain may be happening across multiple domains simultaneously.

What could be happening when the activity of sight and sound regions overlap?

The finding has implications for the kinds of things that we sometimes experience, like when you’re listening to music and you get visual imagery popping into your head or feelings of wanting to dance. The idea is that the experience of music may be much more than just an auditory phenomenon.

The kinds of manipulations that we’re making in the auditory domain closely parallel the things that we’re doing in the visual domain. And I’m hoping it will someday lead to a better understanding of simultaneous audio-visual processing.